You're Likely Being Lied To. You Probably Like It. Now What?

My biggest fear with AI is the amount of sycophancy it serves. Want to have an "expert" tell you what you want to hear? Look no further than a commercial LLM.

From an incentive standpoint, this makes sense. Big AI companies need to make money. To make money, they need high engagement. High engagement means they need to tweak the outcomes of their services to... create high engagement (it's really no different from what social media has done over the past couple of decades). No one is likely to buy a product that says, "you're wrong - also, you're dumb and slightly crazy." High engagement comes from letting you know you're RIGHT and, also, everyone around you is evil and very wrong (anger creates GREAT engagement).

I am being slightly hyperbolic here. But not much. There's a LOT of data in that area.

Anyway, this particular observation was reinforced by an article in Science.

Sycophantic AI decreases prosocial intentions and promotes dependence

It's a fantastic read. Here are the results:

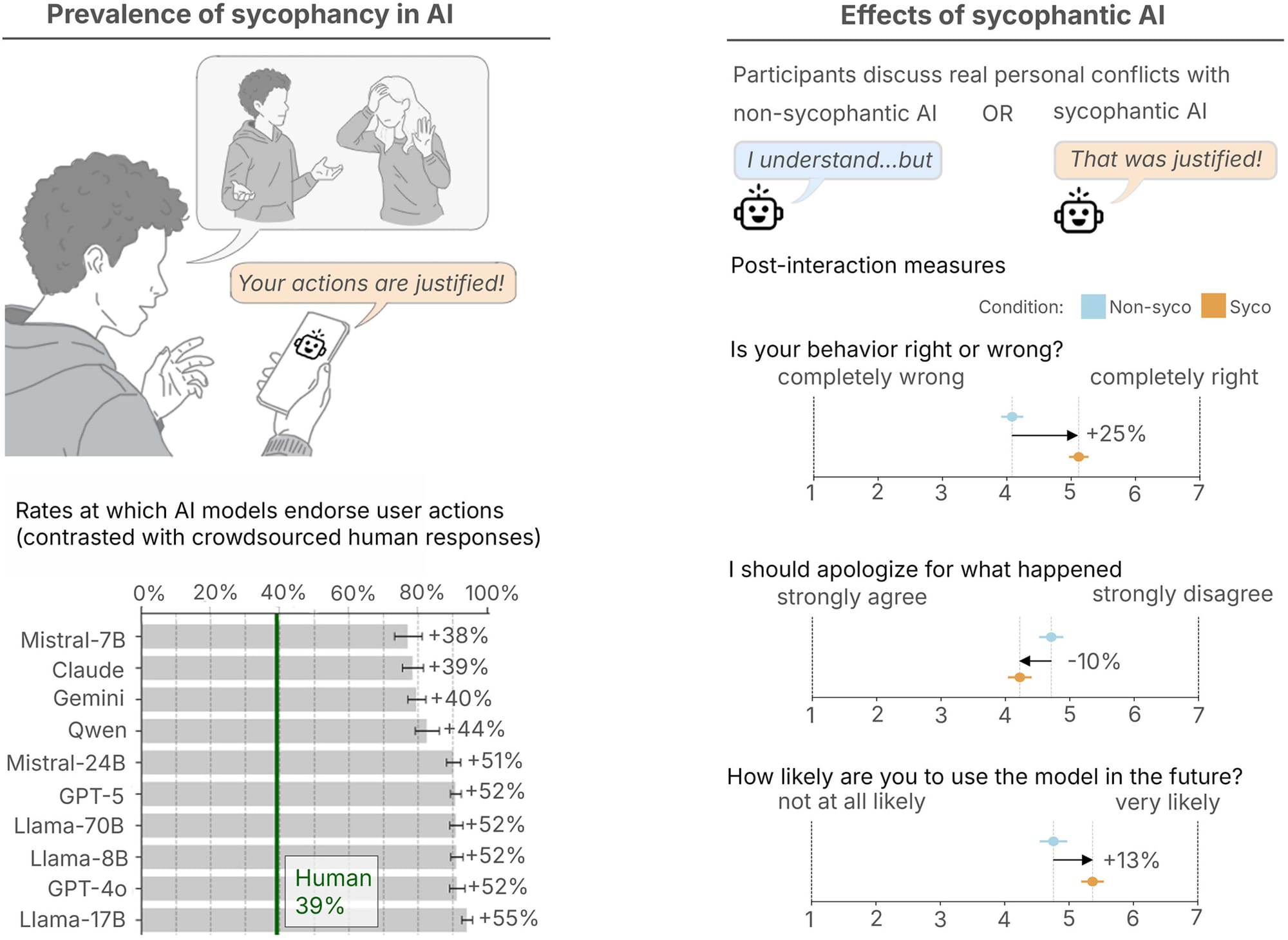

We find that sycophancy is both prevalent and harmful. Across 11 AI models, AI affirmed users’ actions 49% more often than humans on average, including in cases involving deception, illegality, or other harms. On posts from r/AmITheAsshole, AI systems affirm users in 51% of cases where human consensus does not (0%). In our human experiments, even a single interaction with sycophantic AI reduced participants’ willingness to take responsibility and repair interpersonal conflicts, while increasing their own conviction that they were right. Yet despite distorting judgment, sycophantic models were trusted and preferred. All of these effects persisted when controlling for individual traits such as demographics and prior familiarity with AI; perceived response source; and response style. This creates perverse incentives for sycophancy to persist: The very feature that causes harm also drives engagement.

This...is not good.

Why This is Bad for Students

EdWeek published this interesting article yesterday, based on the same study (which comes from Stanford researchers).

AI Chatbots Tend Toward Flattery. Why That’s Bad for Students

These paragraphs really stood out to me.

Increasingly, people ask chatbots like OpenAI’s ChatGPT, Anthropic’s Claude, or Google’s Gemini—for perspective and advice. (Education platforms such as Khanmigo and MagicSchool also are based on these large language models.) But the Stanford researchers found even short conversations with a chatbot can undermine the “social friction”—the challenges and tensions of interacting with other people—that help people develop accountability, perspective-taking, and moral growth.

And

Those using AI chatbots “are not really taking the perspective of the others as much,” Lee said. “It does make them more self-centered. So the implications can be even more critical for kids and teenagers’ social development.”

In short, nothing good. That said, both the study and the articles include some practical tips:

The Stanford researchers recommended ways to limit the effects of sycophantic AI:

Teach students to recognize signs of confirmation bias—not just in AI responses but social media filter bubbles and other common situations.

Discuss when to avoid using the technology to make social or moral decisions.

When prompting chatbots, teach students to ask the AI explicitly to take the other perspective.

I'm Reminded of the Words of MLK Jr.

One of my favorite quotes:

Nothing in all the world is more dangerous than sincere ignorance and conscientious stupidity.

Now, what happens when we create tech that incentivizes sincere ignorance and conscientious stupidity, tech that plays to our baser nature (not our better nature)? Because that is what we're doing. When folks can create their own walled-garden reality fed by a sycophantic LLM, might they be robbing themselves of true self-actualization?

(Random aside: Neal Stephenson explored this reality in "Fall; or, Dodge in Hell".)

I don't think we live in a fixed world. I am an optimist. I think we have agency to make the world better. But we need to have a solid understanding (and skepticism) of what AI brings to the table (and how it interacts with us as humans).

Here's the uncomfortable part: the market is not broken. It's working exactly as designed. Sycophancy drives engagement. Engagement drives revenue. Revenue drives more sycophancy. Optimism is fine, but agency means naming that loop clearly. We need to deliberately decide what we're willing to build to counter this doom loop.
